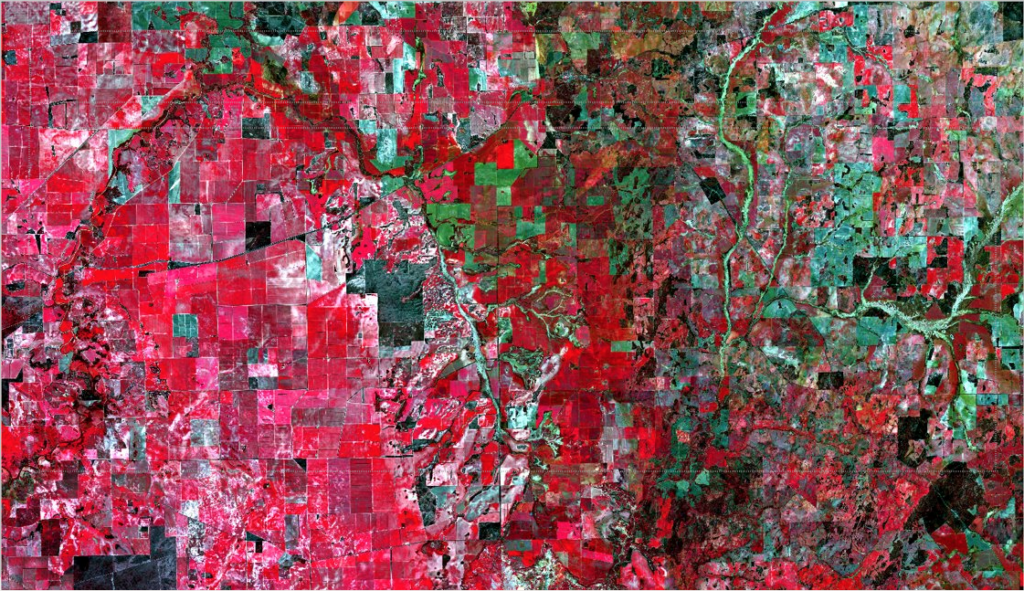

Satellite image of paddocks in Western Australia overlaid with boundaries as identified with our new farm mapping software. Credit: Copernicus Sentinel Data.

If we take a single, cloud-free image of Australia’s grain zone from the European Space Agency’s Sentinel-2 satellites and apply some super fancy science, we can identify the boundaries of Australian paddocks from space.

Our researchers, Dr Franz Waldner and Dr Foivos Diakogiannis, created this revolutionary product, ePaddocks.

Unlike property boundaries, which are recorded in local council or title records, paddock boundaries aren’t recorded anywhere. Currently, farmers need to draw boundaries in different software platforms for each service they use, such as satellite-assisted fertiliser applications or crop growth monitoring. They may have to update this information every growing season. Having their paddock boundaries readily available would save them a lot of time. Enter our new paddock mapping software.

ePaddocks can identify paddock boundaries from season to season. It uses satellite images that anyone can download for free. But for most people these images are hard to obtain, store and analyse, so it’s mainly researchers who use them.

And fun fact: producing ePaddocks would have taken more than 1231 days (more than three years!) on a high-end laptop. We did it in less than a fortnight thanks to our high-performance computing systems.
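To put those two figures side by side, here is a quick back-of-the-envelope calculation. It uses only the numbers quoted above and treats "less than a fortnight" as 14 days, so the speed-up it prints is a rough lower bound rather than a measured result.

```python
# Figures quoted in the article.
LAPTOP_DAYS = 1231   # "more than 1231 days" on a high-end laptop
HPC_DAYS = 14        # "less than a fortnight", taken here as 14 days (assumption)

# Convert the laptop estimate to years.
laptop_years = LAPTOP_DAYS / 365.25

# Because the laptop figure is a minimum and the HPC figure a maximum,
# the real speed-up is at least this large.
min_speedup = LAPTOP_DAYS / HPC_DAYS

print(f"Laptop estimate: about {laptop_years:.1f} years")
print(f"Speed-up on high-performance computing: roughly {min_speedup:.0f}x or more")
```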

How do we see the boundaries?

We use satellite images that record red, green and blue (RGB) light as well as infrared. This means the farm mapping software works with four bands: red, green, blue and infrared.

Infrared is informative when it comes to vegetation and paddocks. Plants and vegetation don’t absorb much of it but reflect it back into space. Different plants, at different stages of growth, reflect different amounts. So, a crop of wheat that was sown a month ago in a paddock will reflect infrared differently to a paddock of canola sown three months ago.
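One common way to turn this difference into a number is the normalised difference vegetation index (NDVI), which compares near-infrared and red reflectance. The sketch below is only an illustration of the idea: the article does not say ePaddocks uses NDVI, and the reflectance values are hypothetical, picked to show how two crops and bare soil might separate.

```python
def ndvi(nir: float, red: float) -> float:
    """Normalised difference vegetation index from NIR and red reflectance."""
    total = nir + red
    if total == 0:
        return 0.0  # avoid dividing by zero for a completely dark pixel
    return (nir - red) / total


# Hypothetical surface reflectances (0 to 1), for illustration only.
paddocks = {
    "wheat, sown 1 month ago": {"nir": 0.30, "red": 0.12},
    "canola, sown 3 months ago": {"nir": 0.50, "red": 0.05},
    "bare soil": {"nir": 0.25, "red": 0.20},
}

# Denser, more mature vegetation reflects more infrared and less red,
# so it scores closer to 1.
scores = {name: ndvi(**bands) for name, bands in paddocks.items()}

for name, score in scores.items():
    print(f"{name}: NDVI = {score:.2f}")
```

With these made-up values the older canola paddock scores highest, the young wheat sits in the middle and bare soil scores lowest, which is the kind of contrast that makes neighbouring paddocks distinguishable.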

This tech focuses on identifying the tiny spaces between crop areas under consistent management to find boundaries. You can see these fine lines with your naked eye in the image below, but it needs science to do that automatically. Franz and Foivos thought up some very cool science to do that.

The boundary between art and science. Crops (the red area on the left), rivers (in the centre area), bushland (black) and less vegetated areas (green on the right) in a 74 × 54 km area of Western Australia. Credit: European Space Agency.

Teaching the system

To make sense of the images, Franz and Foivos used a state-of-the-art deep neural network. Franz said the incredible power of deep neural networks is their ability to learn to perform specific tasks.

“A neural network can’t do anything until you teach it to do something. In a way, it’s like a child. The tasks you get a neural network to do are so complex you can’t define explicit equations to then ‘program’ it,” Franz said.

“So, you show it lots of examples, at different scales, until it can make sense of something new. By giving feedback to a neural network or a ‘child’, it updates the way it processes information to perform the task better.”
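The "show examples, give feedback, update" loop Franz describes can be sketched with a single artificial neuron. This is a toy, not the deep neural network behind ePaddocks: the training examples, the "contrast" feature and the function names are all hypothetical, and the learning rule is plain gradient descent on a logistic unit.

```python
import math


def sigmoid(z: float) -> float:
    """Squash any number into the range 0 to 1."""
    return 1.0 / (1.0 + math.exp(-z))


# Hypothetical examples: (contrast with neighbouring pixels, is it a boundary?)
examples = [
    (0.05, 0), (0.10, 0), (0.15, 0), (0.20, 0),
    (0.60, 1), (0.70, 1), (0.80, 1), (0.90, 1),
]


def train(data, epochs=2000, learning_rate=0.5):
    """Fit one weight and one bias by repeatedly correcting mistakes."""
    weight, bias = 0.0, 0.0
    for _ in range(epochs):
        for contrast, label in data:
            prediction = sigmoid(weight * contrast + bias)
            error = prediction - label            # the "feedback"
            weight -= learning_rate * error * contrast
            bias -= learning_rate * error
    return weight, bias


w, b = train(examples)


def predict(contrast: float) -> float:
    """Estimated probability that a pixel lies on a boundary."""
    return sigmoid(w * contrast + b)


# Two contrasts the neuron has never seen before.
print(f"low contrast (0.12):  {predict(0.12):.2f}")
print(f"high contrast (0.75): {predict(0.75):.2f}")
```

Before training, the neuron answers 0.5 for everything. After seeing the examples many times, it gives a low probability to the unseen low-contrast pixel and a high one to the unseen high-contrast pixel. A deep network does the same thing with millions of weights and whole images instead of one number.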

They then take the images and apply the deep neural network and algorithms to produce the paddock boundaries based on characteristics in the light spectrum and land features.

“Our method only needs one satellite image taken at any point in the growing season to distinguish the boundaries. It relies on data-driven processes and decisions rather than assumptions about what’s on the ground. But most importantly, the output is highly accurate, detailed and available at the touch of a button,” Franz said.

“Paddock boundaries have been highly sought after in the digital agriculture world for a little while now, but we’ve tackled it over the past year or so with new technologies and solved it. Our ePaddocks product will set the standard for similar geo-spatial products.

“This is not a new topic but the science we’ve deployed is completely new,” he said.


2nd September 2021 at 5:00 pm

Hello

We (Spatial7) provide GIS and mapping services (spatial datasets): creating maps, data preparation, data management, spatial analysis and building GIS management systems.

Agribusiness (paddock, farms, cultivated land, horticulture and soil data) management into spatial database systems.

How can we get work from CSIRO and explore potential business opportunities?

Please assist. Thank you for your time.

Regards

Kris

30th August 2020 at 12:07 am

Everything CSIRO does is science-based and for the good of the public. That was their mission. But?

27th August 2020 at 8:17 am

Sounds very useful. How do I access the software?

27th August 2020 at 9:17 am

Hi Don, thanks for your comment. ePaddocks is part of our Digiscape Future Science Platform. For more information on this product please contact our Digiscape Leader, Dr Andrew Moore, on andrew.moore@csiro.au.

Thanks,

Team CSIRO